Sexual morality

This article is one of a series looking at changes in public opinion over the last 50 years, with a focus on culture war topics. In this installment, we’ll look at responses to four questions in the General Social Survey (GSS) related to sexual activity:

- Premarital sex (premarsx): There’s been a lot of discussion about the way morals and attitudes about sex are changing in this country. If a man and woman have sex relations before marriage, do you think it is always wrong, almost always wrong, wrong only sometimes, or not wrong at all?

- Teen premarital sex (teensex): What if they are in their early teens, say 14 to 16 years old? In that case, do you think sex relations before marriage are always wrong, almost always wrong, wrong only sometimes, or not wrong at all?

- Extramarital sex (xmarsex): What is your opinion about a married person having sexual relations with someone other than the marriage partner--is it always wrong, almost always wrong, wrong only sometimes, or not wrong at all?

- Same-sex relations (homosex): What about sexual relations between two adults of the same sex--do you think it is always wrong, almost always wrong, wrong only sometimes, or not wrong at all?

As we’ll see, answers to these questions have diverged in the last 50 years. A large majority answer that extramarital and teen sex are wrong, and that has barely changed (although opposition to teen sex has declined somewhat in recent years). At the same time, opposition to premarital sex and same-sex relations has declined substantially.

In this article, we’ll look at these trends and decompose them into cohort and period effects. In the next article, we’ll look at the relationship between these responses and religion, both affiliation and attendance.

We’ll start with the first question, on premarital sex.

Premarital sex

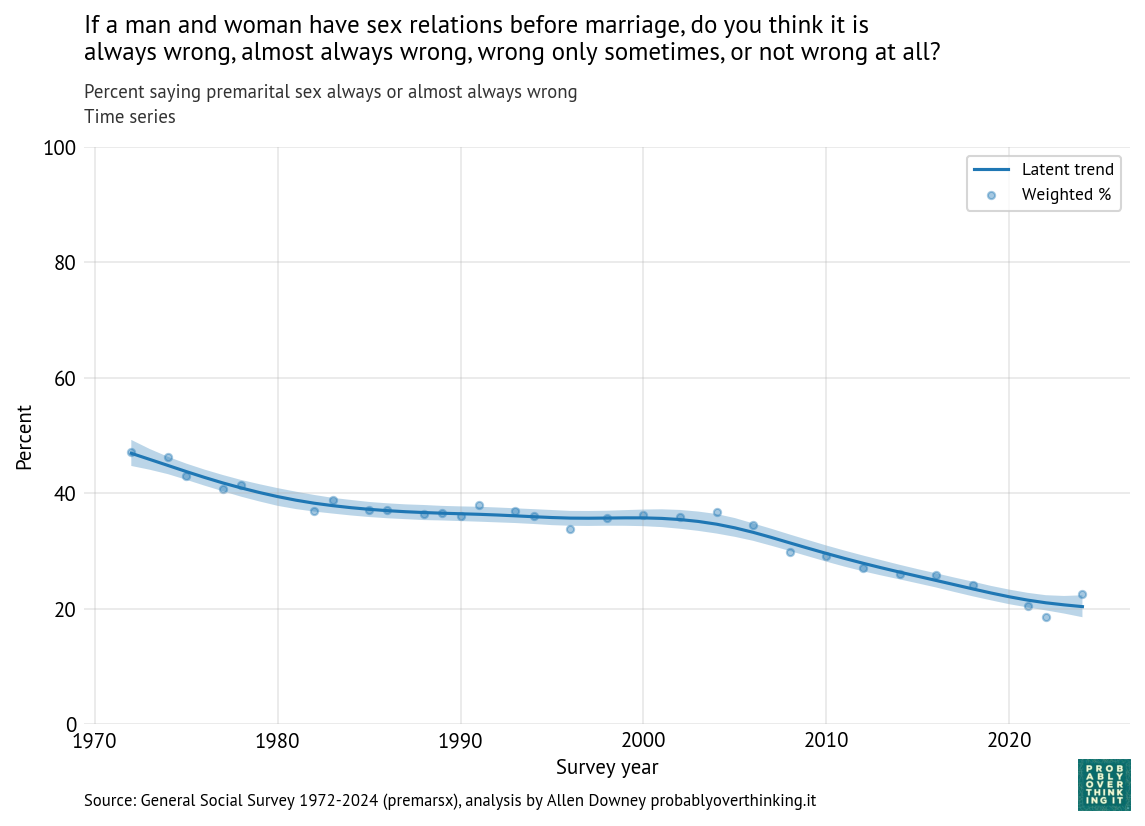

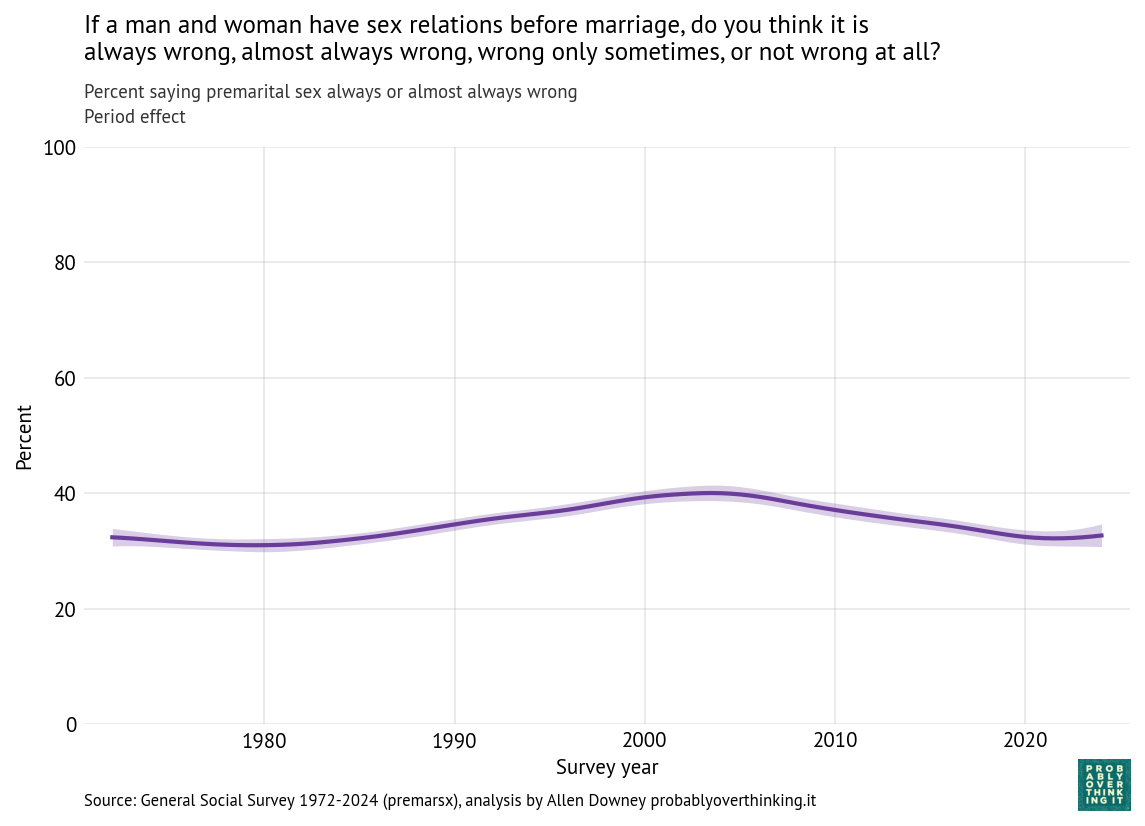

The following figure shows the percentage of respondents saying premarital sex is always or almost always wrong, from 1972 to 2024. The shaded area shows the results from a Bayesian model that estimates the latent trend — that is, a slowly varying underlying level of opposition to premarital sex.

Opposition to premarital sex has declined since 1972, from about 47% to about 20%.
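As a rough illustration of the quantity plotted, here is a minimal sketch of how the raw percentage for each survey year could be computed. The data are made up, the function name `percent_opposed_by_year` is hypothetical, and the sketch ignores survey weights and the Bayesian smoothing, so it is not the analysis behind the figure.

```python
from collections import defaultdict

# Answers counted as opposition, following the definition in the text
OPPOSED = {"always wrong", "almost always wrong"}

def percent_opposed_by_year(responses):
    """Return {year: percent of valid answers that fall in OPPOSED}."""
    counts = defaultdict(lambda: [0, 0])  # year -> [opposed, total valid]
    for year, answer in responses:
        if answer is None:  # skip respondents with no valid answer
            continue
        counts[year][1] += 1
        if answer in OPPOSED:
            counts[year][0] += 1
    return {
        year: 100 * opposed / total
        for year, (opposed, total) in sorted(counts.items())
    }

# Made-up toy responses, not GSS data
toy = [
    (1972, "always wrong"),
    (1972, "almost always wrong"),
    (1972, "wrong only sometimes"),
    (1972, "not wrong at all"),
    (2024, "always wrong"),
    (2024, "not wrong at all"),
    (2024, "not wrong at all"),
    (2024, "not wrong at all"),
    (2024, None),
]
result = percent_opposed_by_year(toy)
print(result)
```

With real data, each year’s percentage is a noisy estimate, which is one reason to fit a smooth latent trend rather than read the raw points directly.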

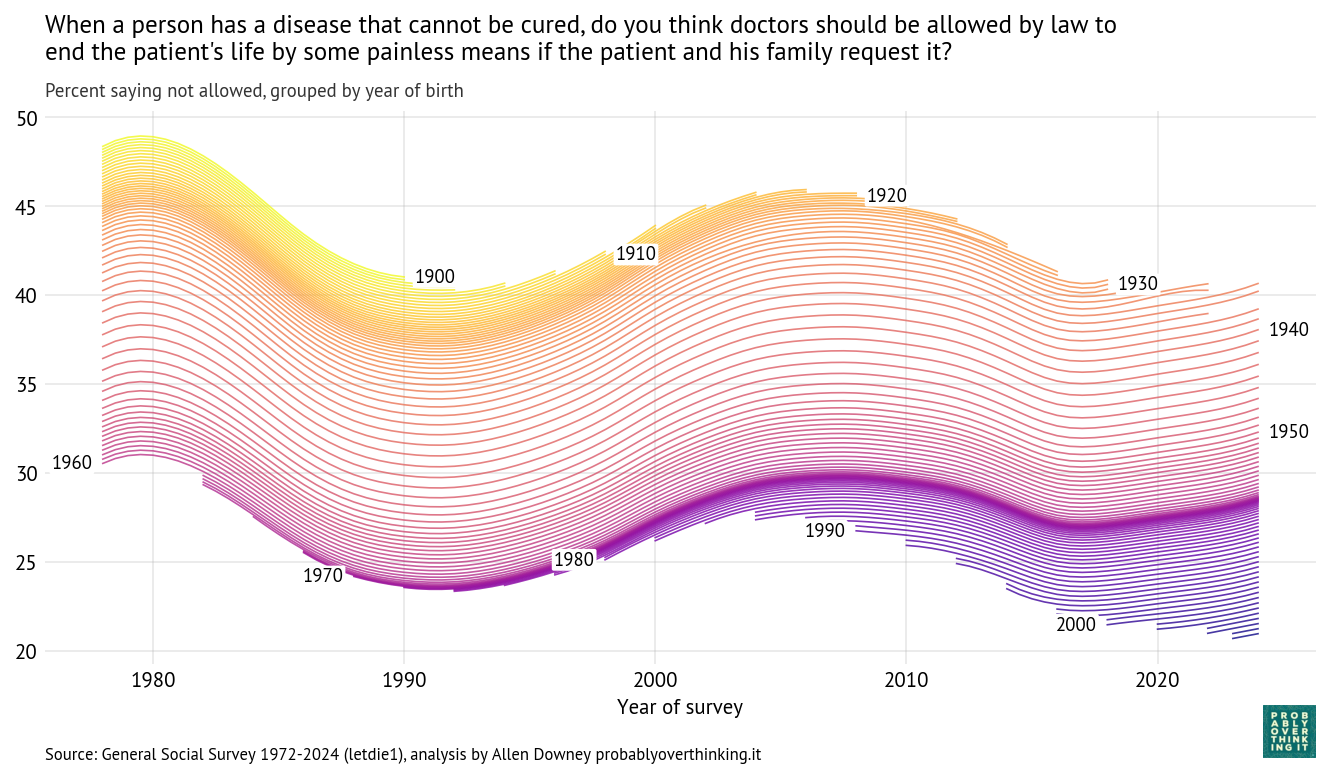

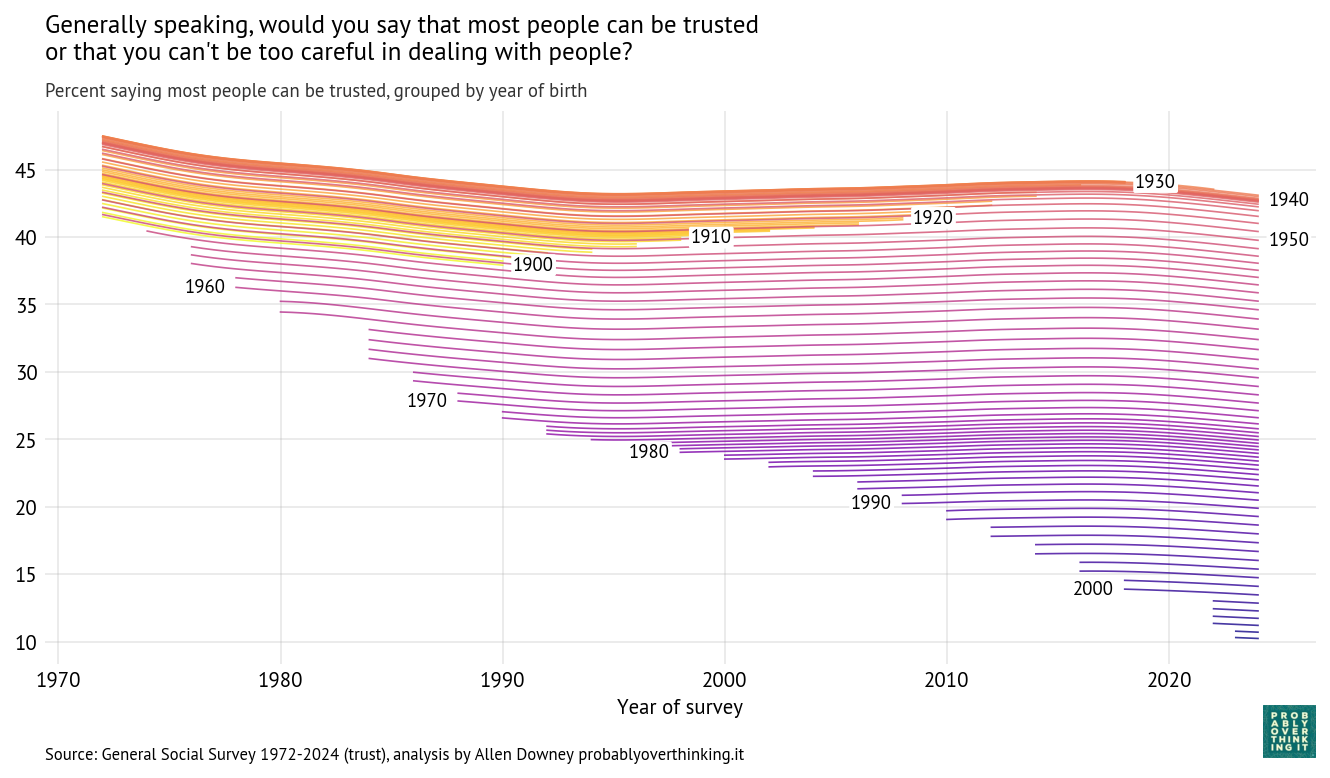

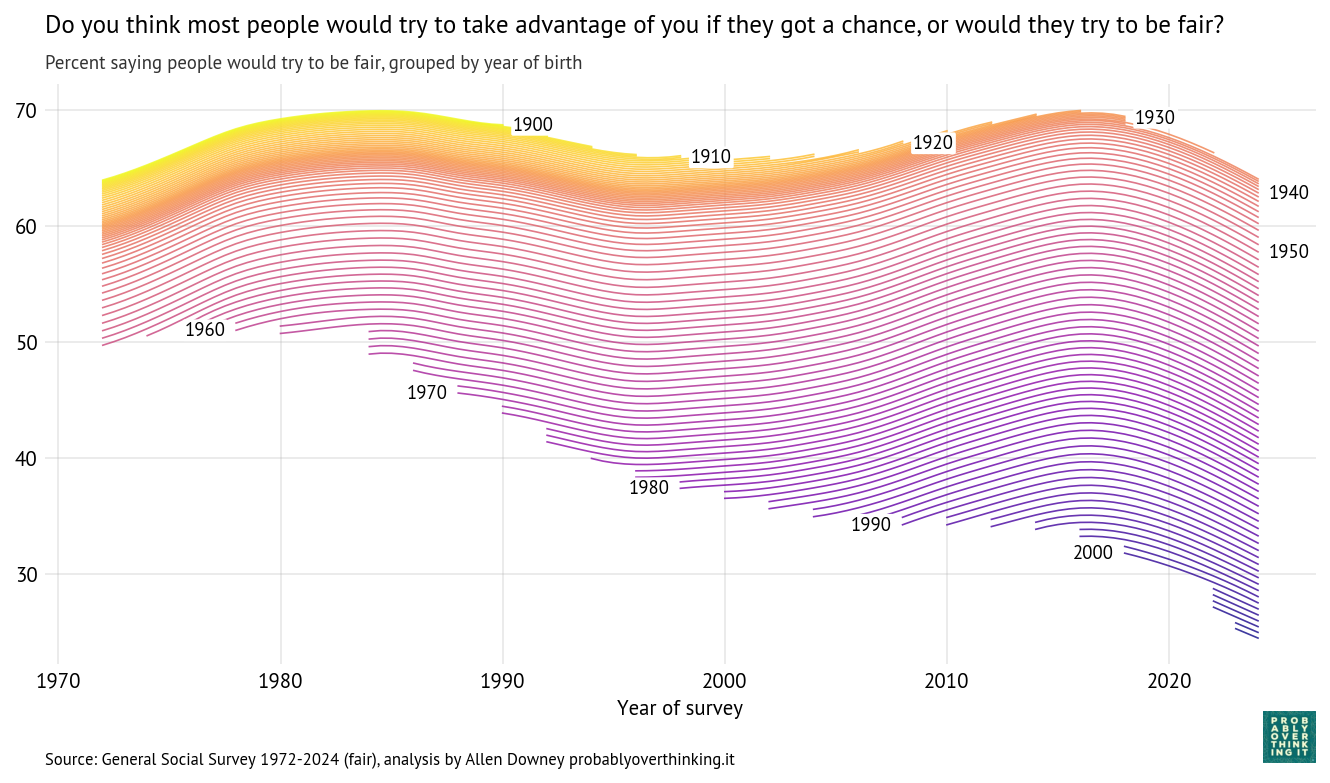

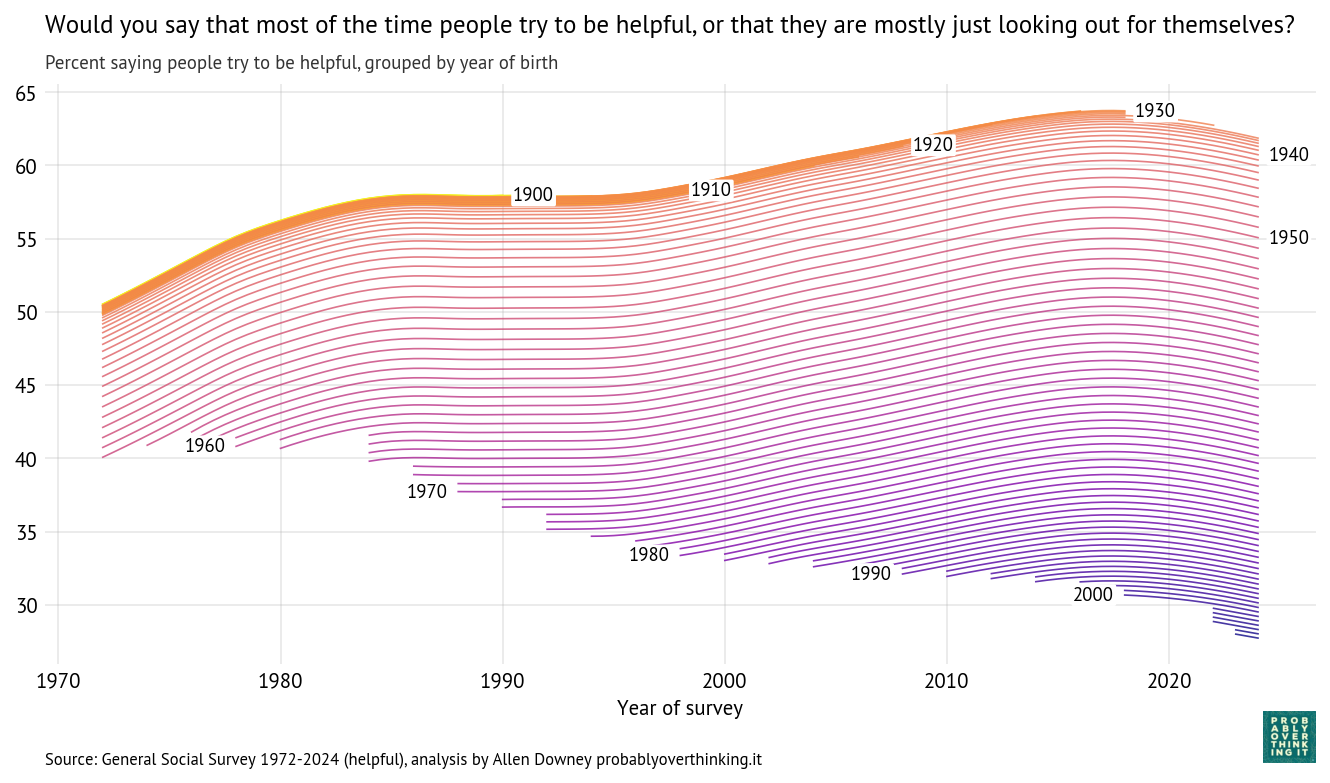

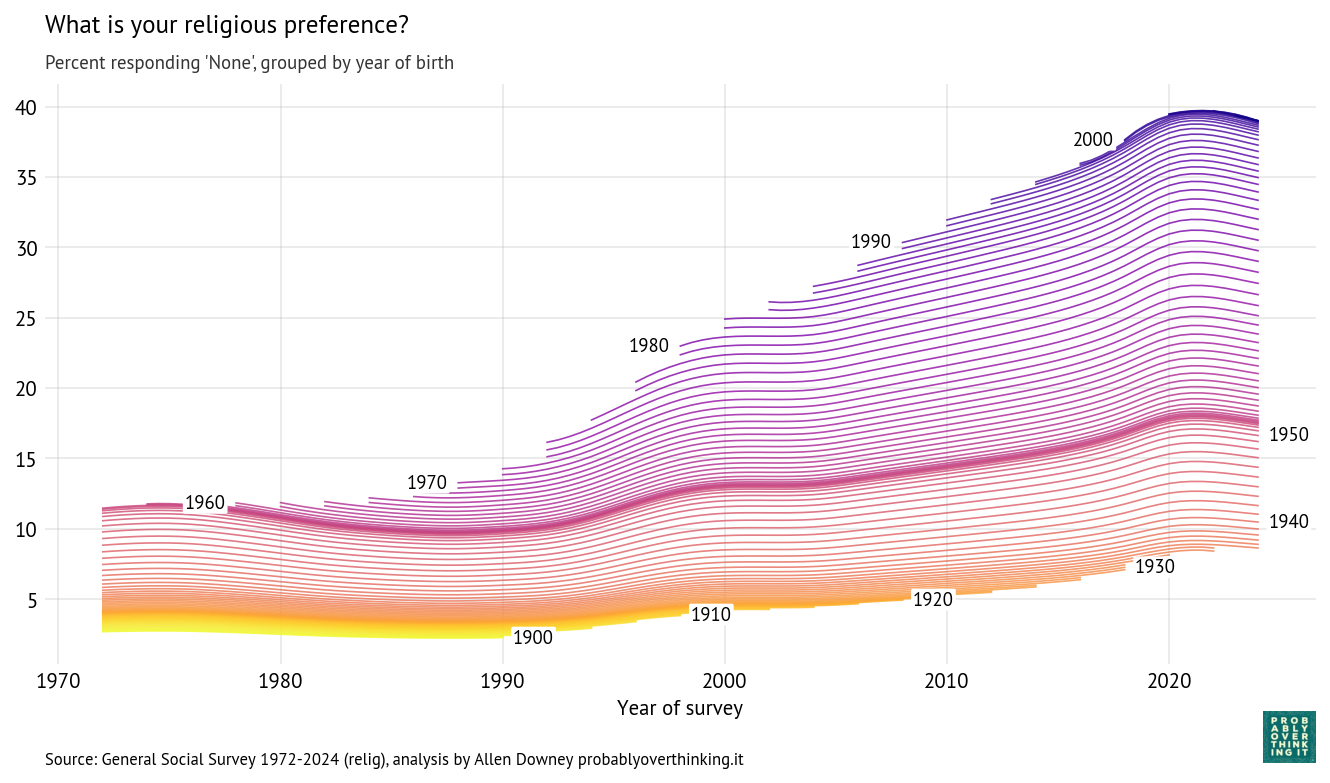

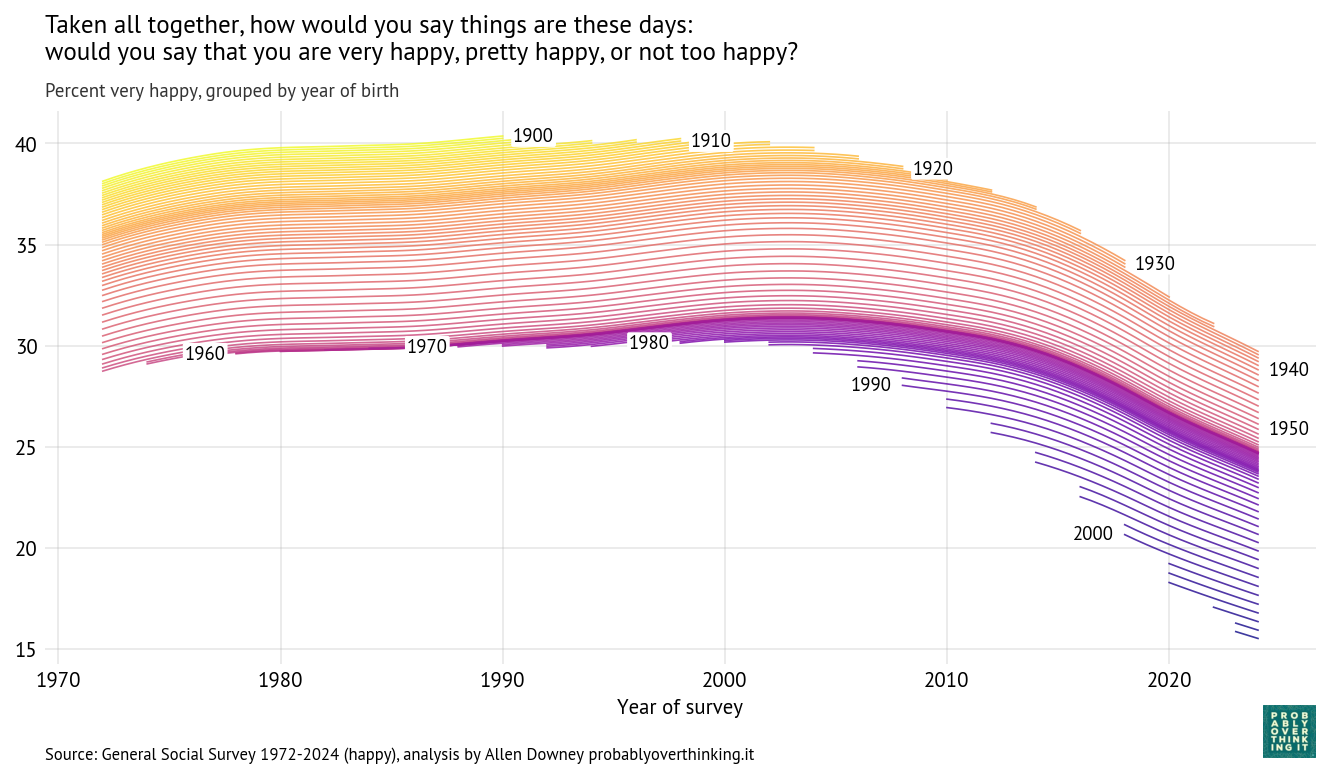

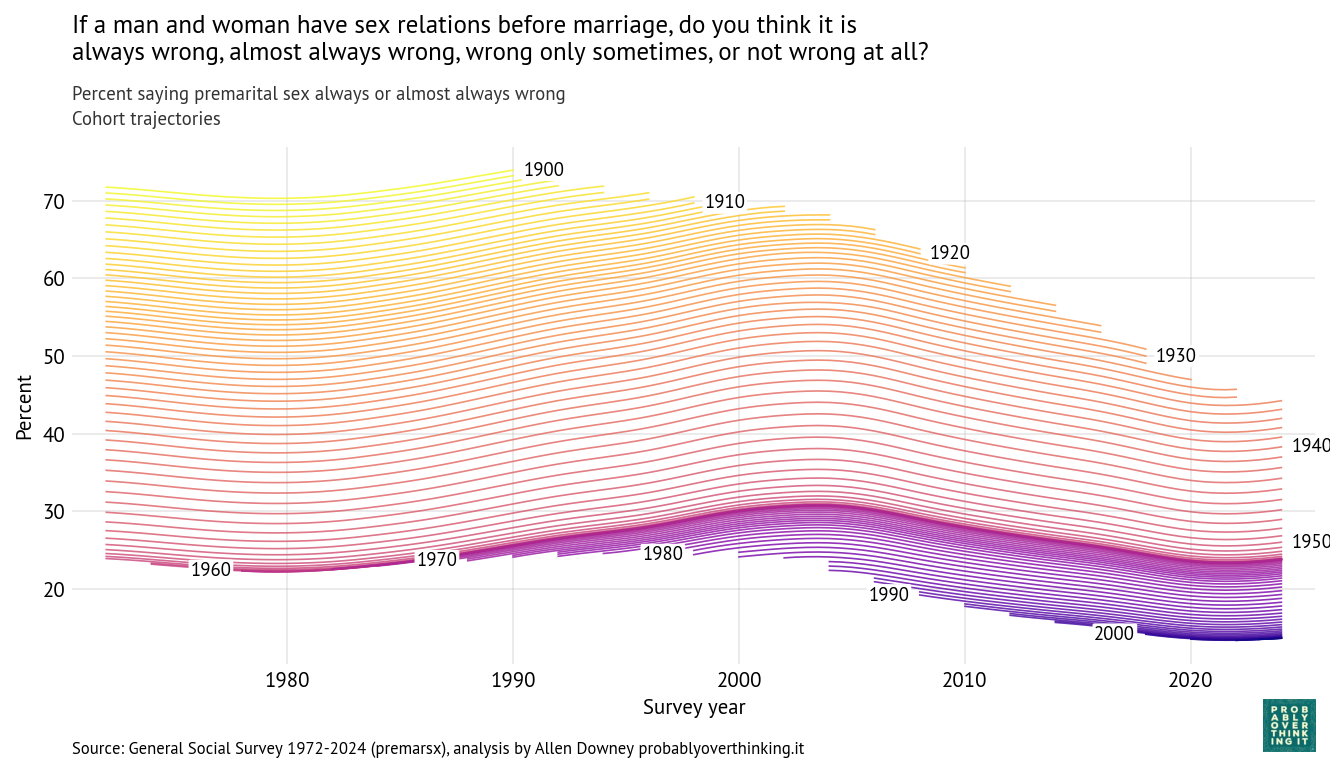

As always, when we see this kind of change over time, it might be caused by cohort or period effects, or a combination of the two. Using a Bayesian model, I estimate a cohort effect for each birth year and a period effect for each survey year. The following figure shows the resulting trajectory for each cohort over time.

Each line represents a single birth year. For example, the yellow line at the top shows the fitted trajectory for people born in 1900, who were 72 when the survey started in 1972 and 90 when they aged out in 1990. The blue line in the bottom right shows responses of people born in 2000, who became eligible to participate in the survey when they turned 18 in 2018.

One pattern is clear: each cohort is less likely than the previous cohort to say that premarital sex is wrong. Among people born in 1900, more than 70% said so. Among people born in the 2000s, the figure is close to 10%. So that’s a big difference.
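The endpoints of each cohort’s line follow from simple age arithmetic. The sketch below assumes respondents are observed from age 18 through age 90, which is inferred from the two examples above rather than from the survey documentation. The function name `observation_window` is hypothetical.

```python
SURVEY_START, SURVEY_END = 1972, 2024  # span of the data discussed here
MIN_AGE, MAX_AGE = 18, 90              # assumed eligibility ages

def observation_window(birth_year):
    """Return (first_year, last_year) a cohort can appear, or None."""
    first = max(SURVEY_START, birth_year + MIN_AGE)
    last = min(SURVEY_END, birth_year + MAX_AGE)
    return (first, last) if first <= last else None

for birth_year in (1900, 1950, 2000):
    print(birth_year, observation_window(birth_year))
```

Because no cohort is observed across the whole survey, comparing cohorts directly mixes differences between generations with differences between eras, which is why the two effects have to be separated by a model.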

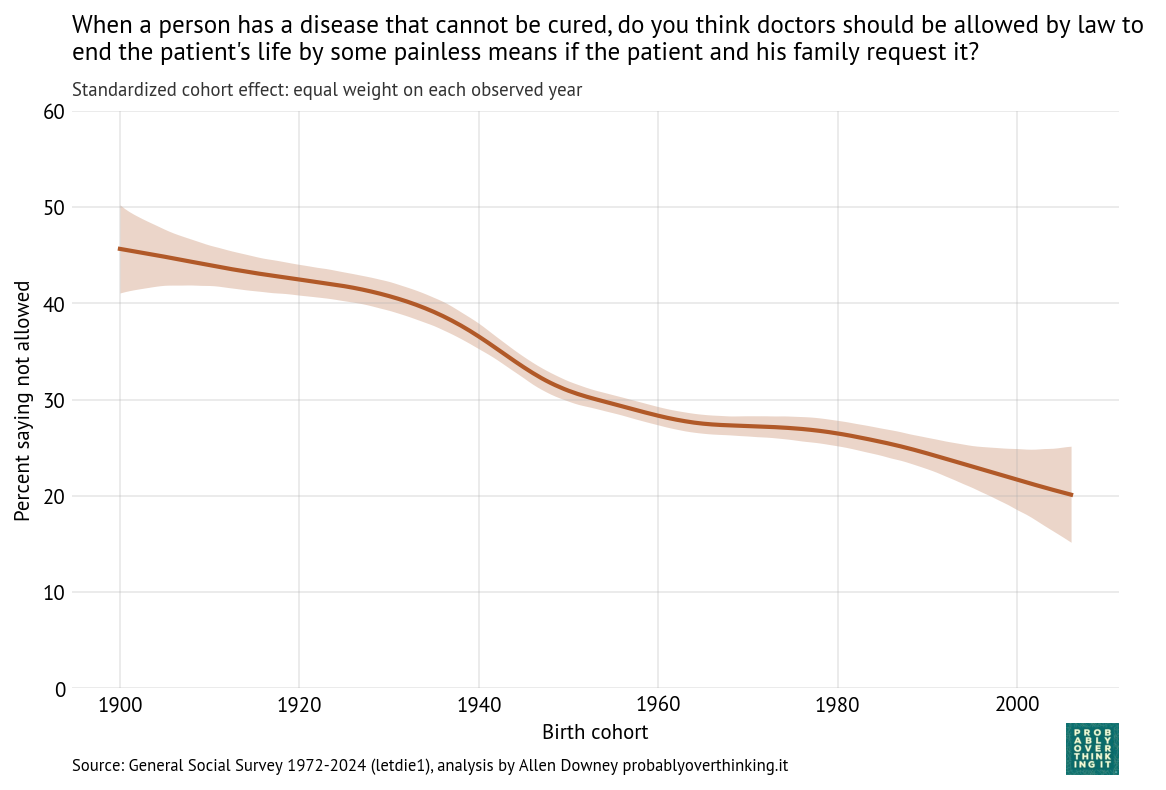

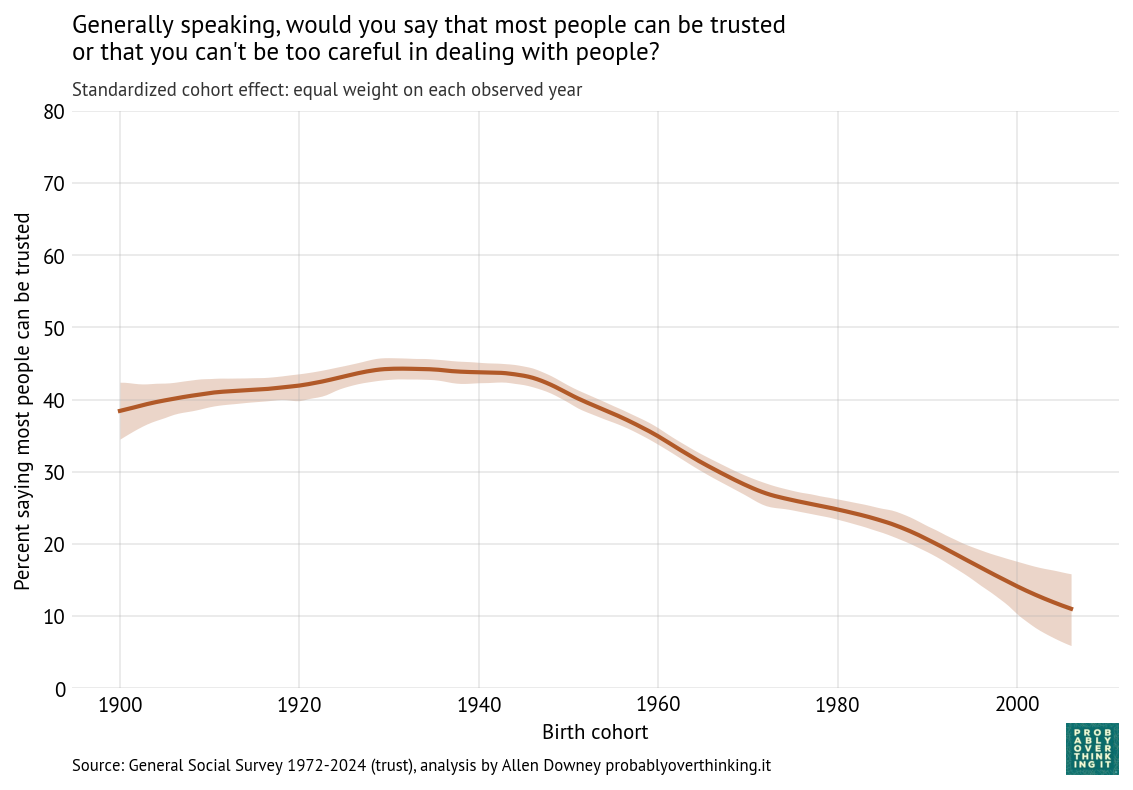

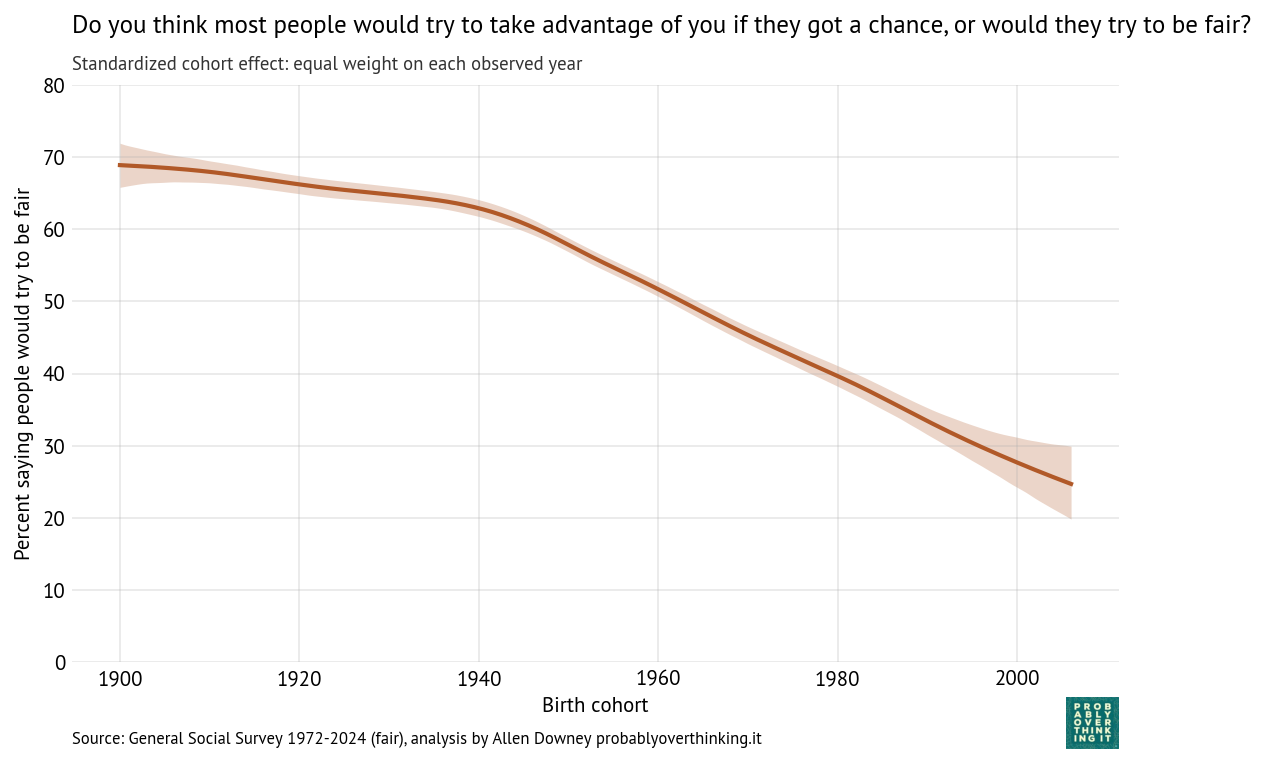

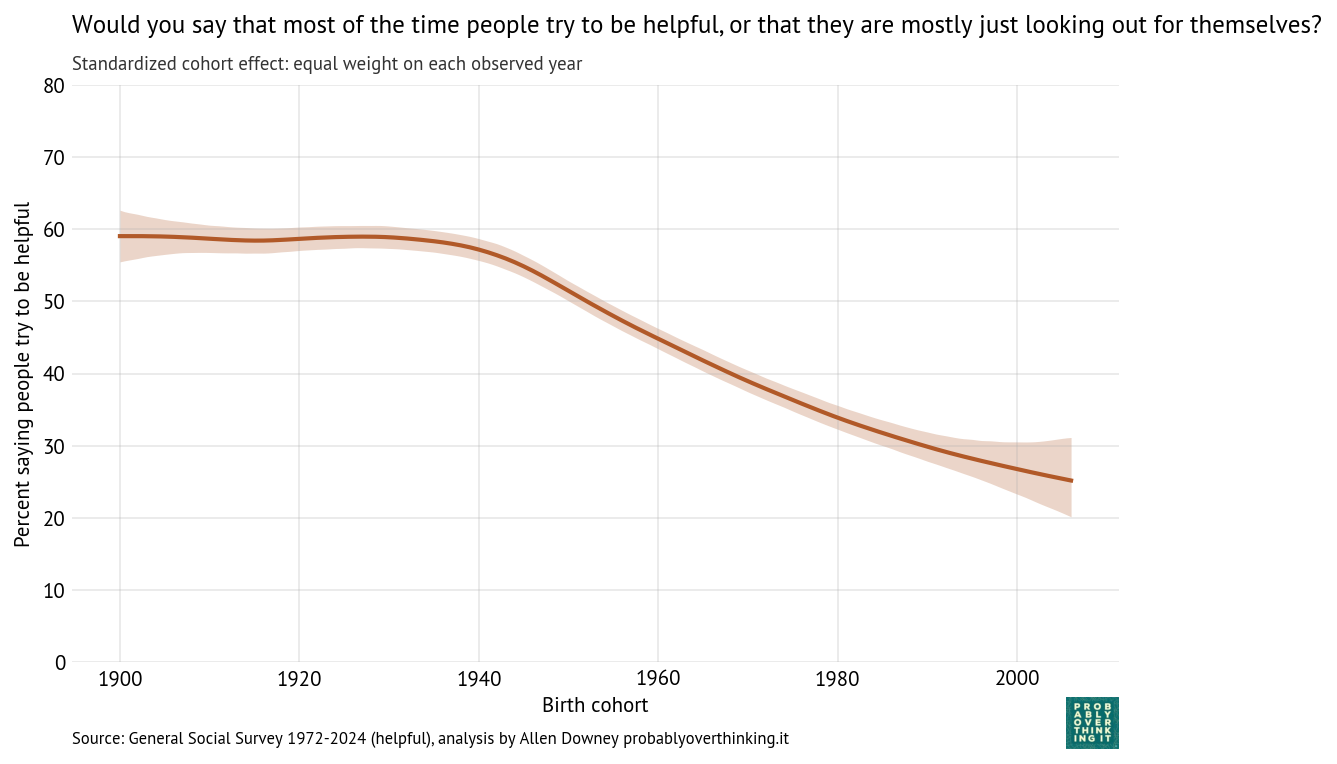

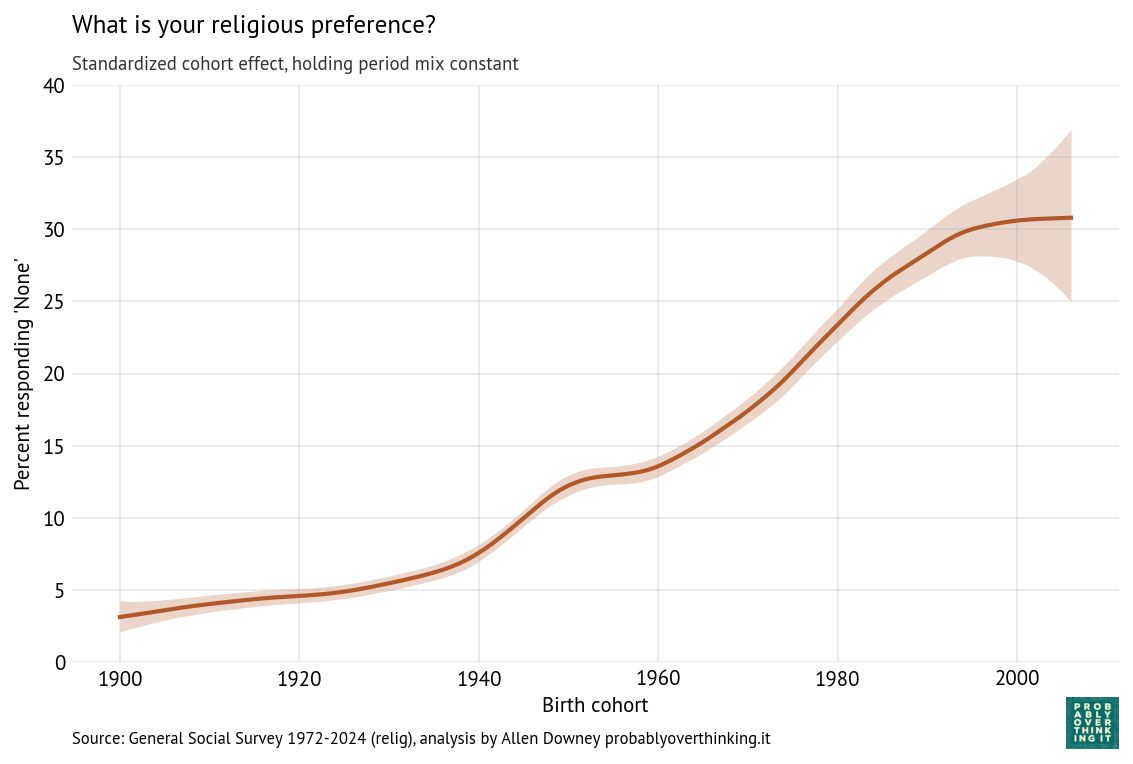

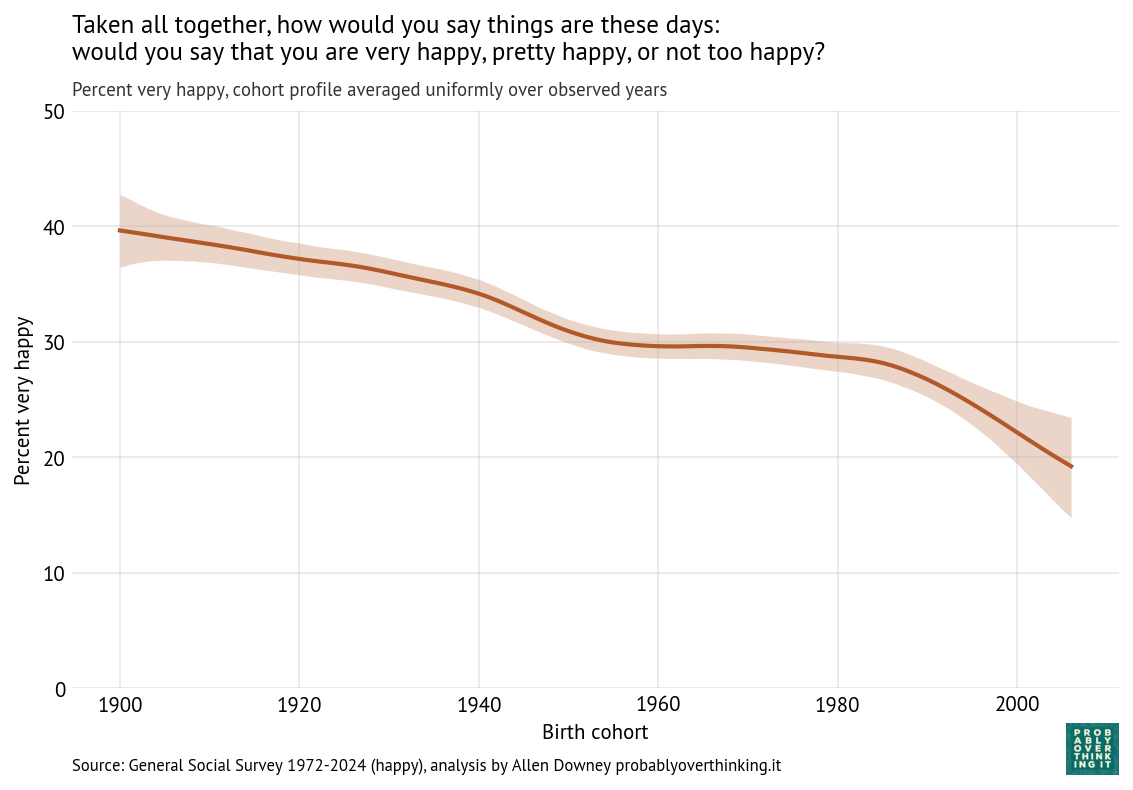

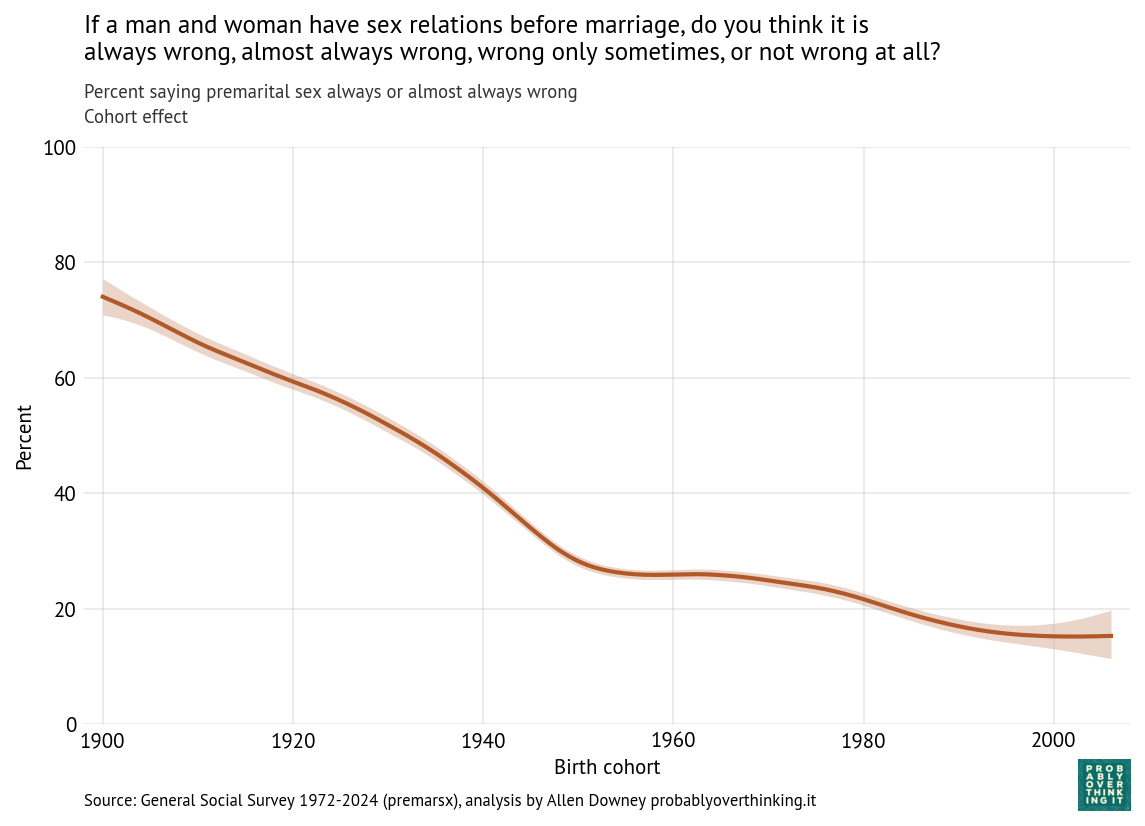

From these results, we can estimate the cohort and period effects separately. The following figure shows the cohort effect, standardized to control for the period effect by simulating responses as if every cohort were interviewed during every iteration of the survey.

The decline was steepest between the cohorts born in 1900 and 1950. After that, it leveled off, then declined again among the cohorts born in the 1980s.
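To make the standardization step concrete, here is a minimal sketch under a strong assumption: that the model is additive on the log-odds scale, with one effect per birth year and one per survey year. The article does not specify the model’s form, so this structure, the function names, and all of the numbers are hypothetical.

```python
import math

def expit(x):
    """Inverse logit: map log-odds to a probability."""
    return 1 / (1 + math.exp(-x))

def standardize_cohorts(intercept, cohort_effects, period_effects):
    """Percent opposed per cohort, averaged over every survey year.

    Assumes (hypothetically) an additive model on the log-odds scale:
    logit(p) = intercept + cohort effect + period effect.
    """
    standardized = {}
    for birth_year, c in cohort_effects.items():
        # Predict this cohort's response in every survey year,
        # including years when the cohort was not actually observed
        probs = [expit(intercept + c + p) for p in period_effects.values()]
        standardized[birth_year] = 100 * sum(probs) / len(probs)
    return standardized

# Made-up effects on the log-odds scale, not estimates from the GSS
cohort_effects = {1900: 1.0, 1950: 0.0, 2000: -2.0}
period_effects = {1972: -0.2, 1990: 0.2, 2018: 0.0}
standardized = standardize_cohorts(0.0, cohort_effects, period_effects)
for birth_year, pct in standardized.items():
    print(birth_year, round(pct, 1))
```

Averaging over the same set of survey years for every cohort means differences between cohorts no longer depend on when each one happened to be interviewed. Swapping the roles of the two sets of effects gives the corresponding standardized period effect.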

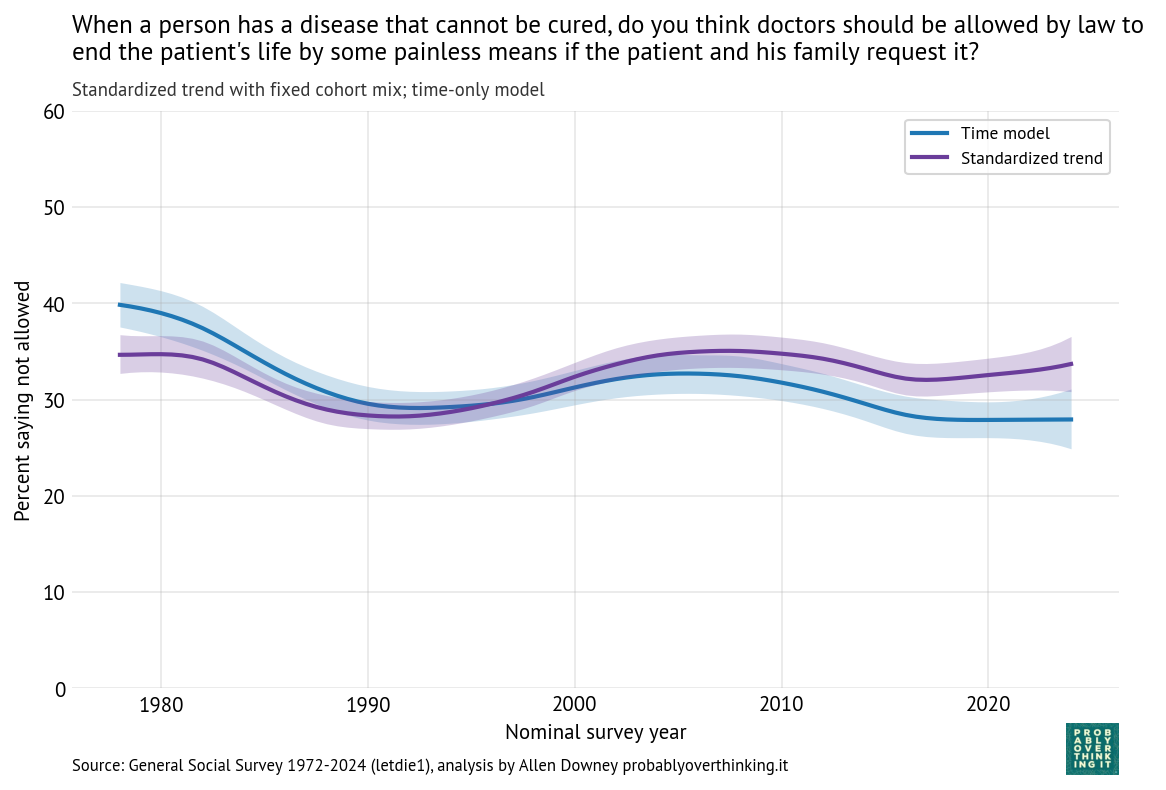

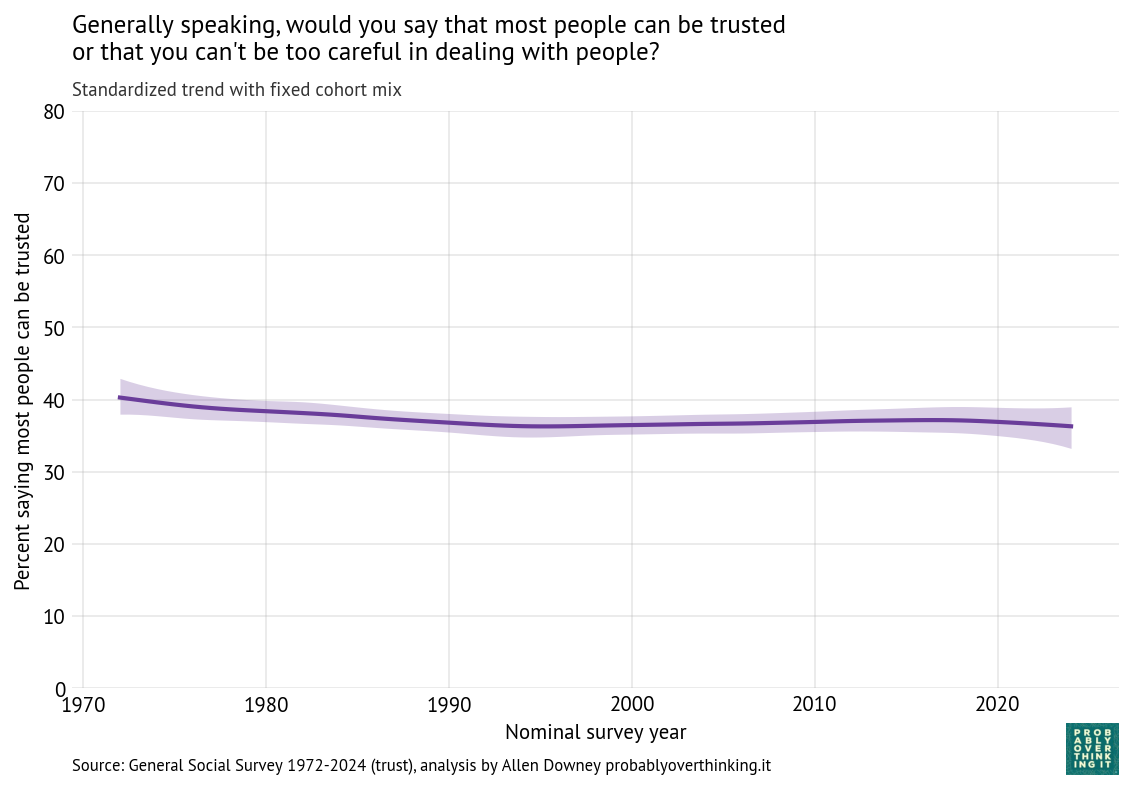

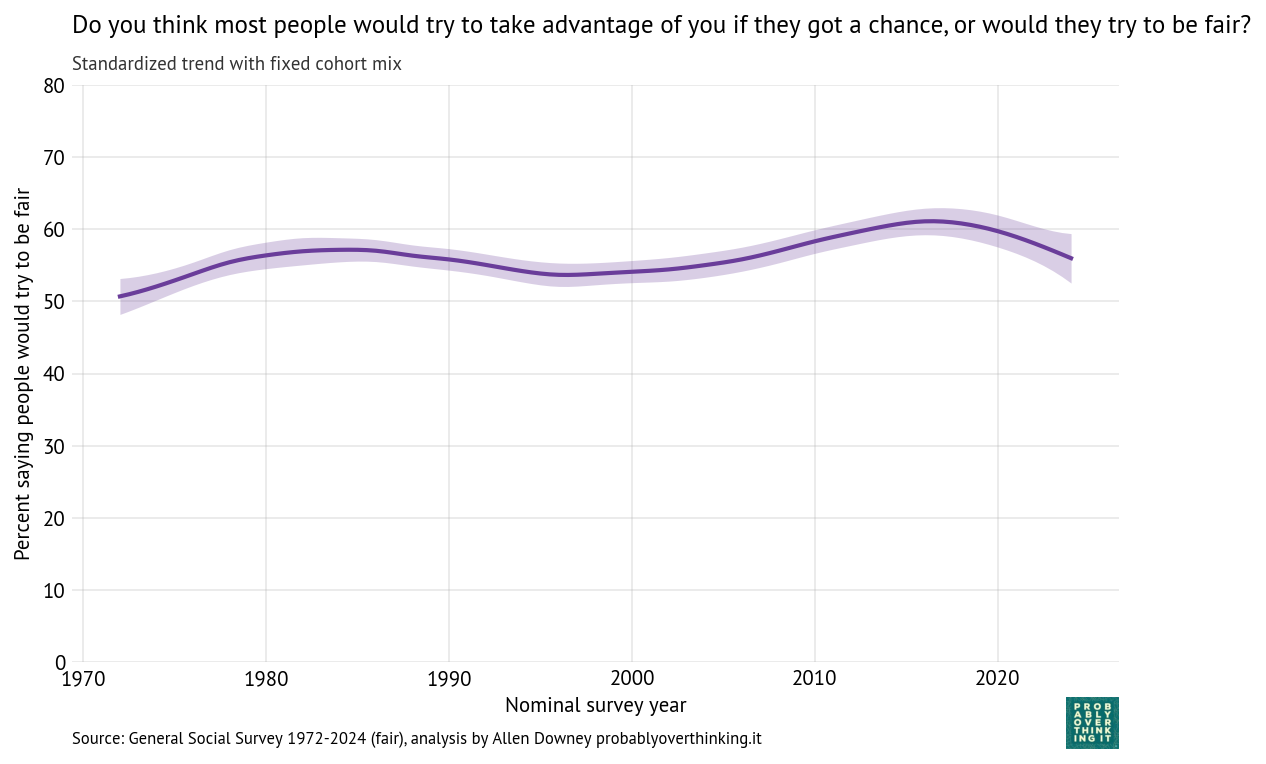

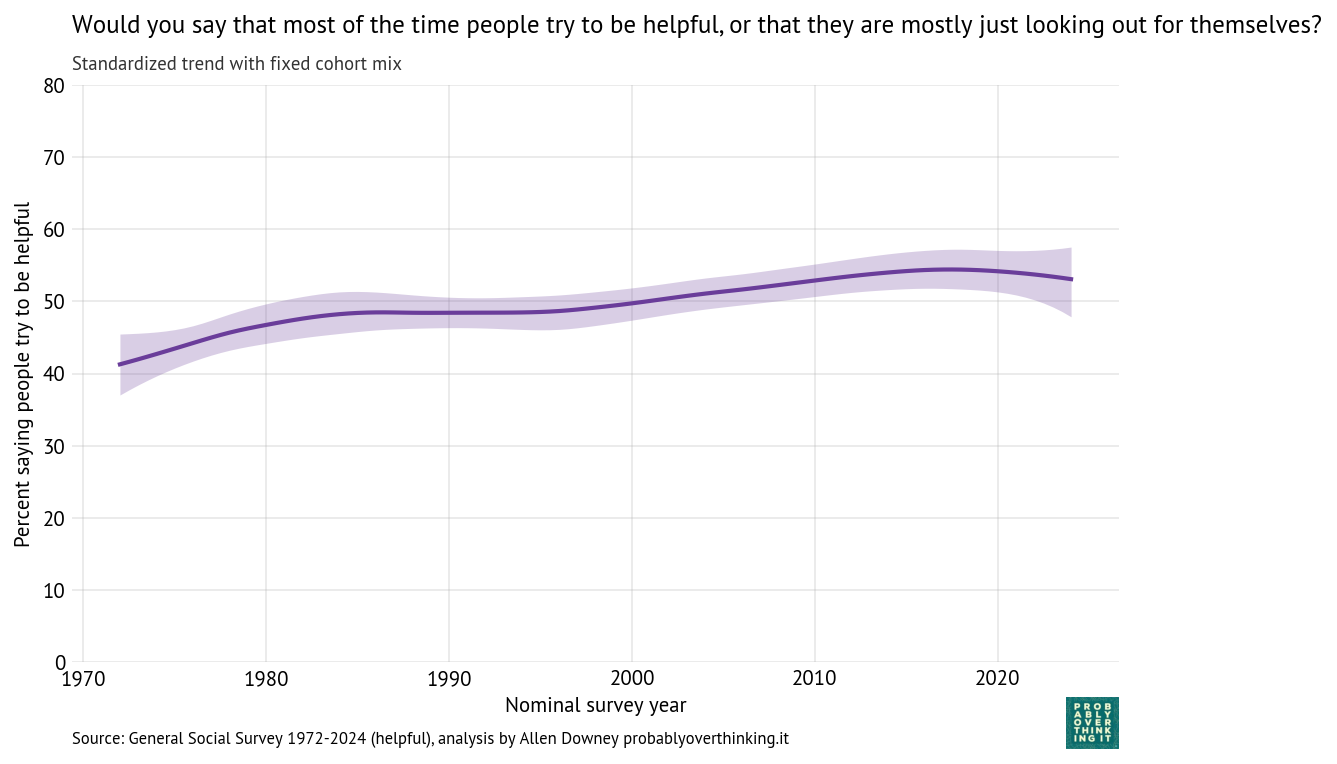

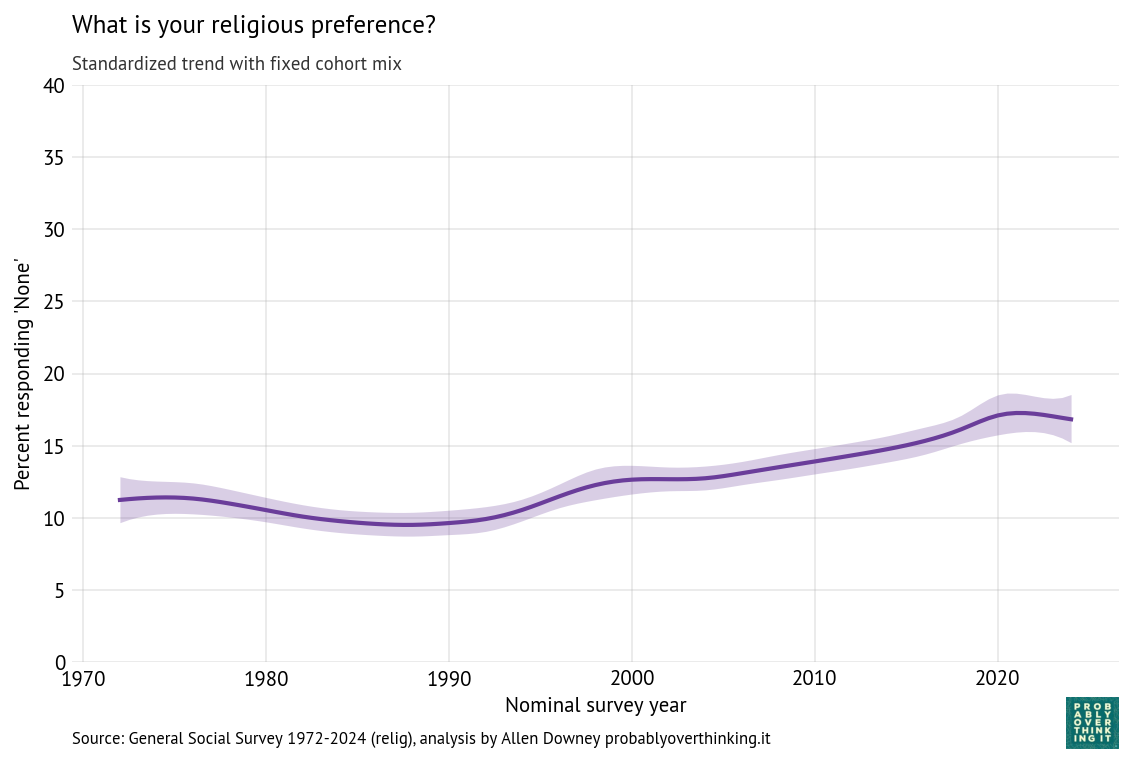

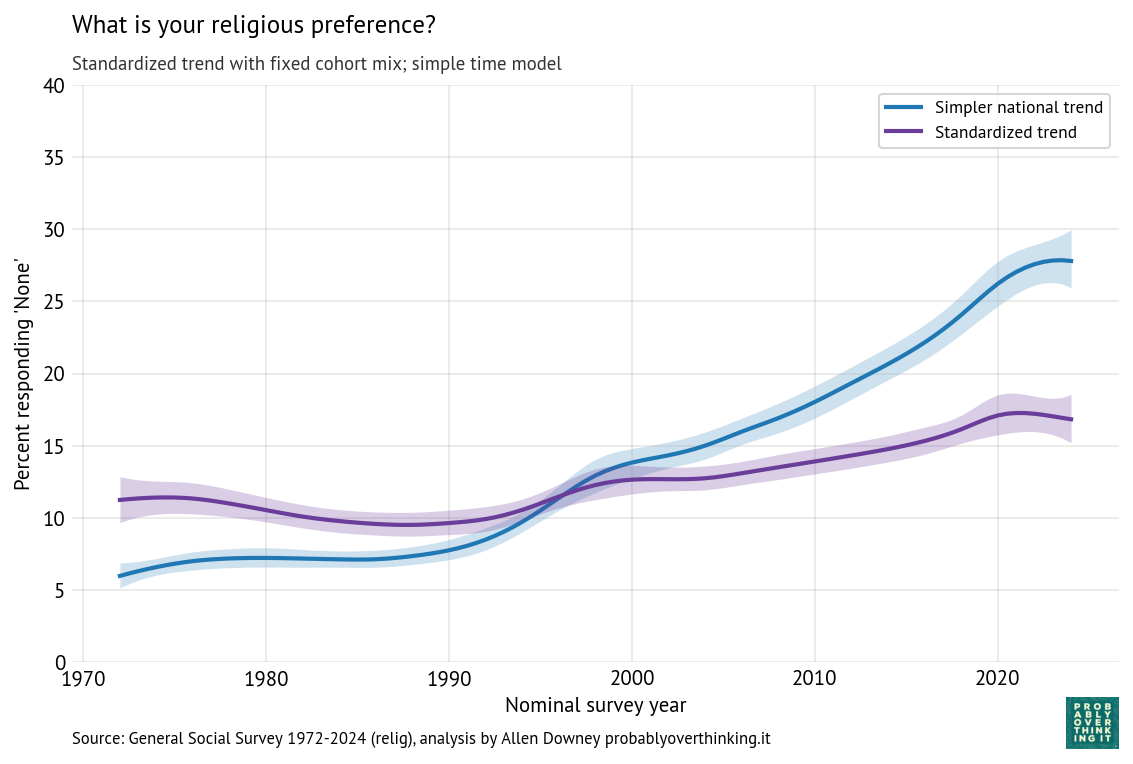

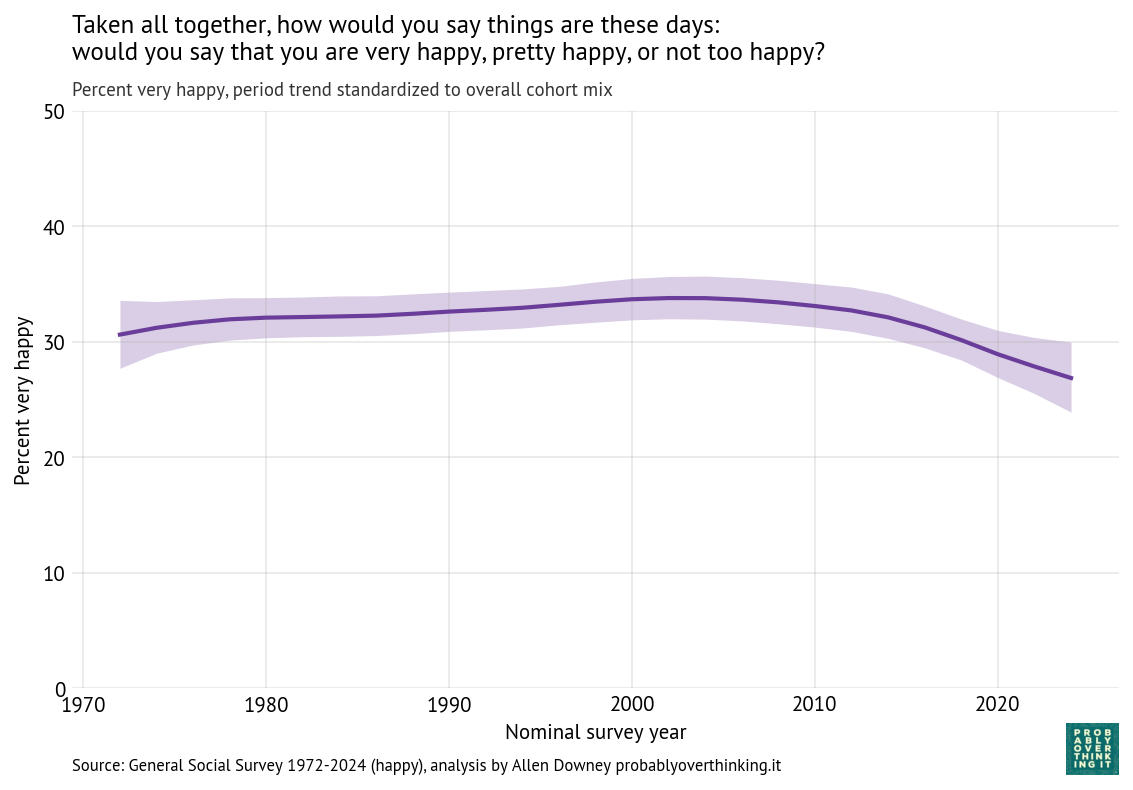

Now we can estimate the period effect, standardized to control for the cohort effect, shown in the following figure.

The period effect is smaller than the cohort effect — about 8 percentage points from peak to trough — and less consistent. Opposition to premarital sex increased between 1980 and 2000, which coincides with increasing awareness of AIDS and public messaging about sexual risk, as well as the rise of the Religious Right and “family values” politics.

But before I speculate about the causes of these patterns, it will be useful to look at the responses to the other questions.

When is sex wrong?

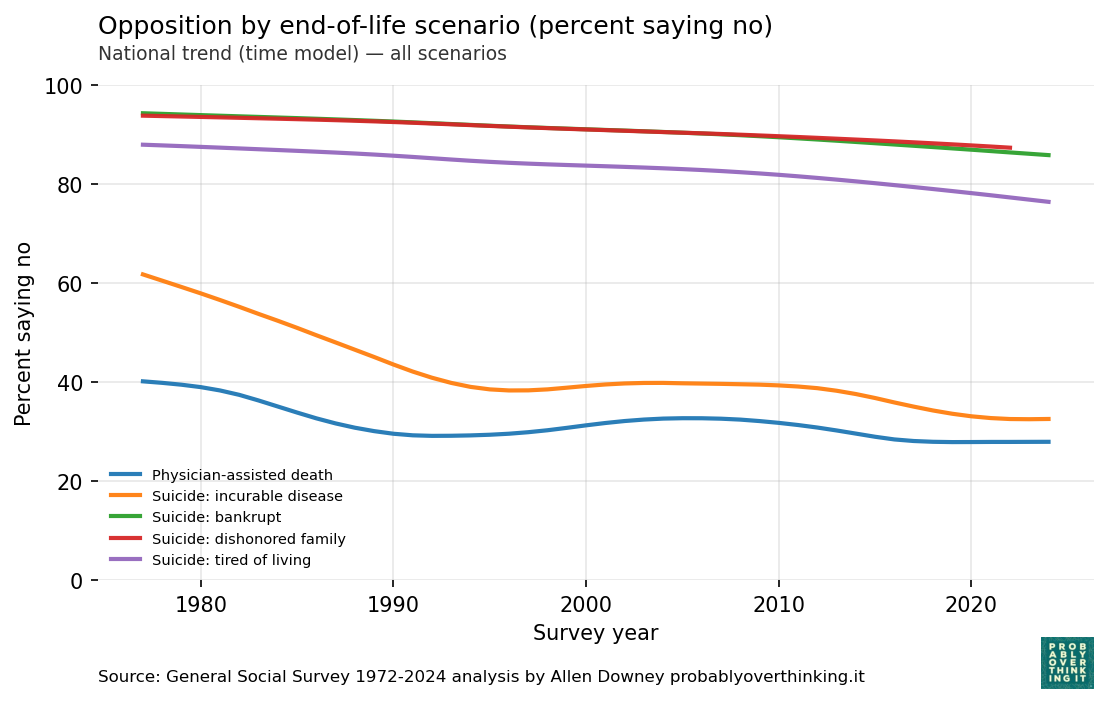

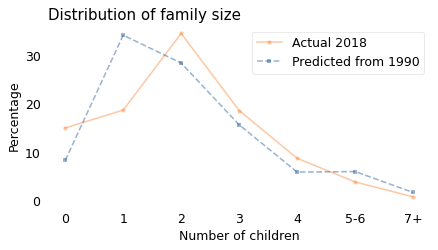

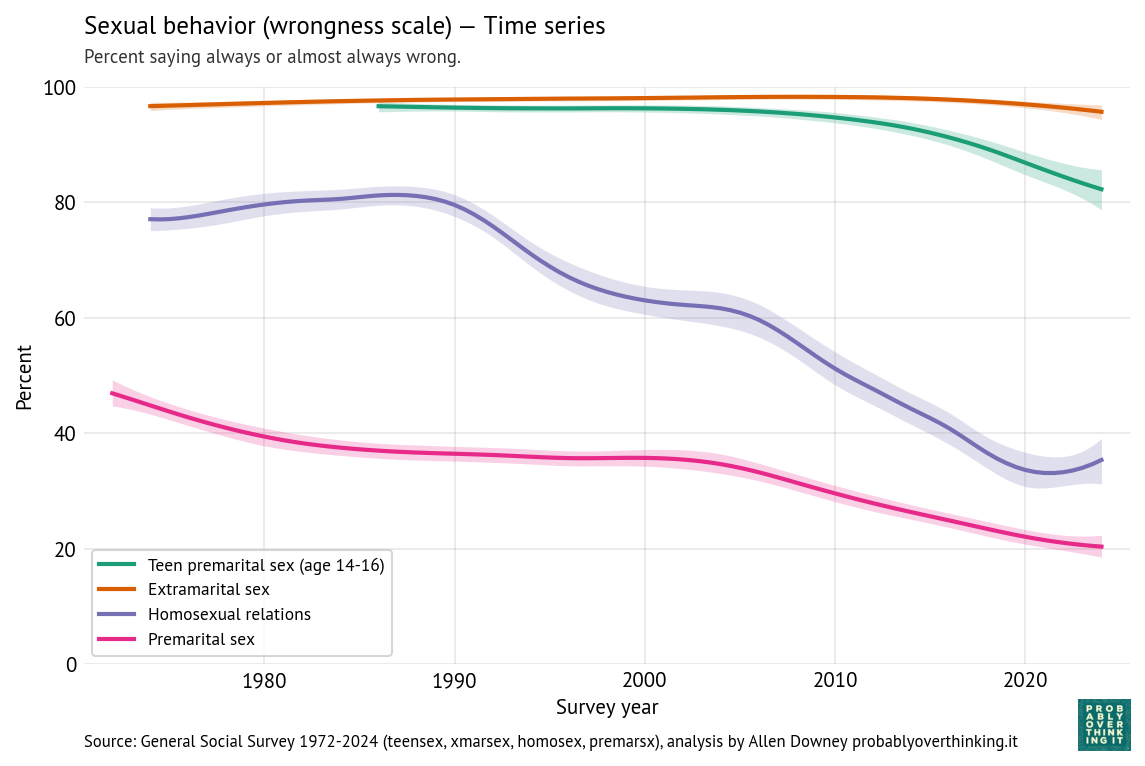

The following figure shows the estimated percentage who think sex is wrong (always or almost always) in each of the four scenarios: premarital, teen, extramarital, and same-sex.

Reading from top to bottom:

- Nearly everyone thinks “a married person having sexual relations with someone other than the marriage partner” is wrong, and the percentage has barely changed in more than 50 years.

- Opposition to teen sex (the question specifies ages 14-16) is nearly as high, although it has declined since 2005 by about 15 percentage points.

- Opposition to same-sex relations was high and mostly unchanged between 1972 and 1990. Since then it has decreased by almost 50 percentage points in 30 years, which is an astonishing speed for this kind of social change. Since 2020, opposition has increased a little.

- Finally, as we’ve already seen, opposition to premarital sex has declined substantially since the beginning of the survey.

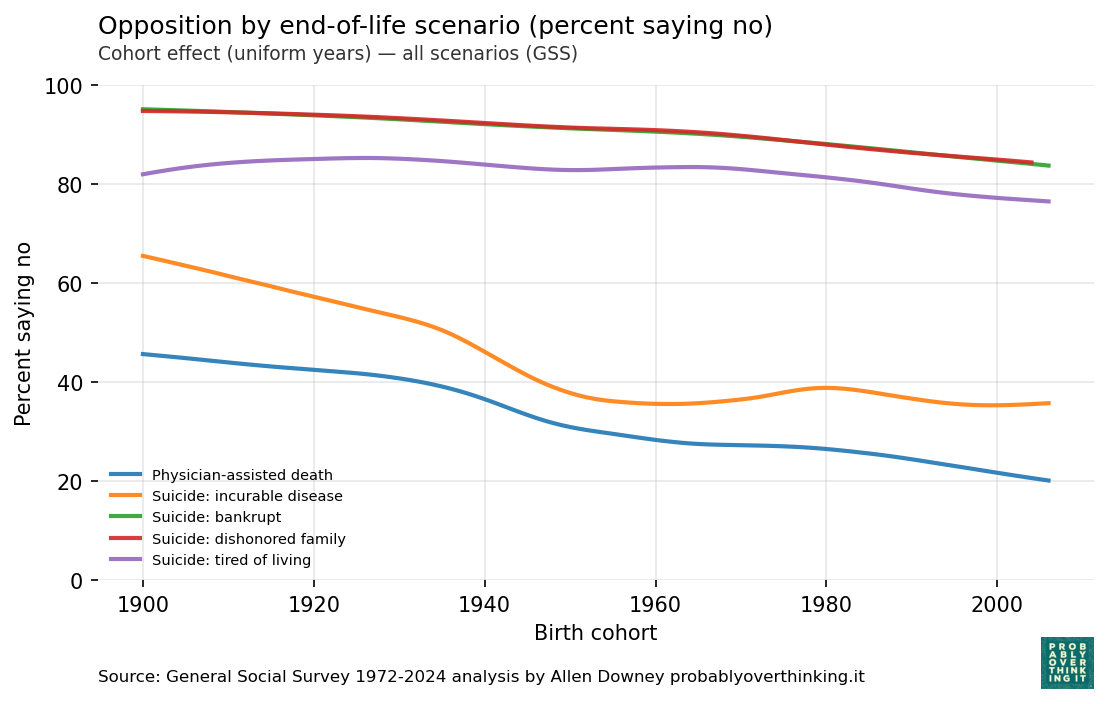

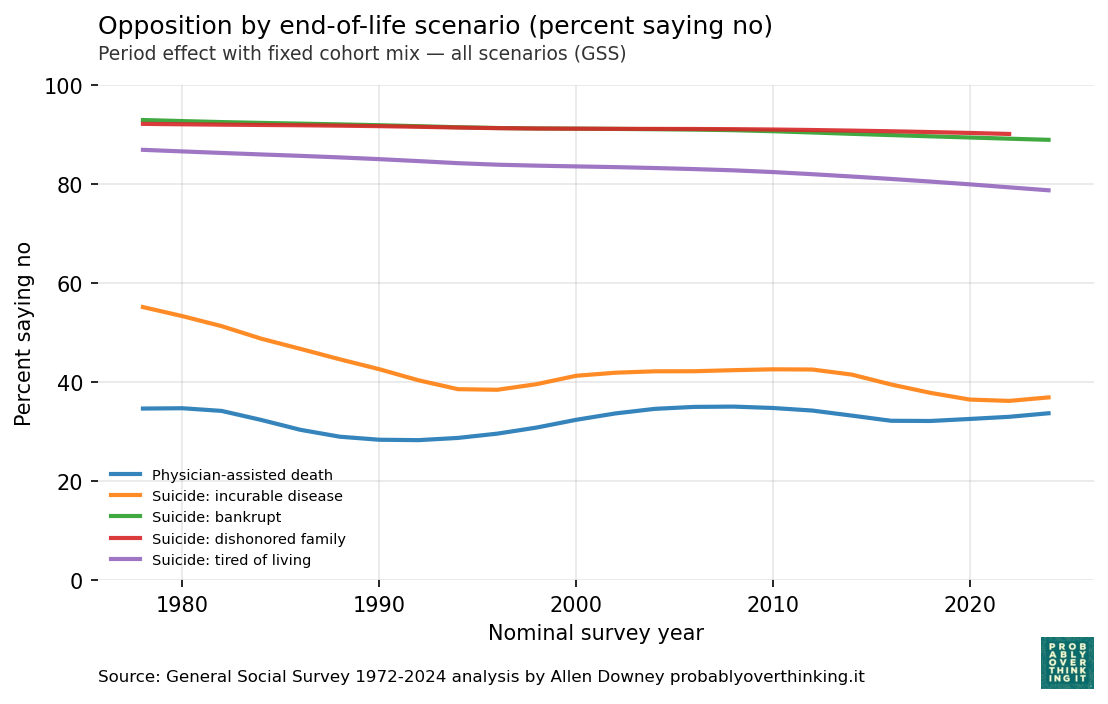

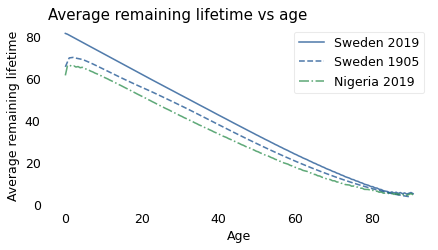

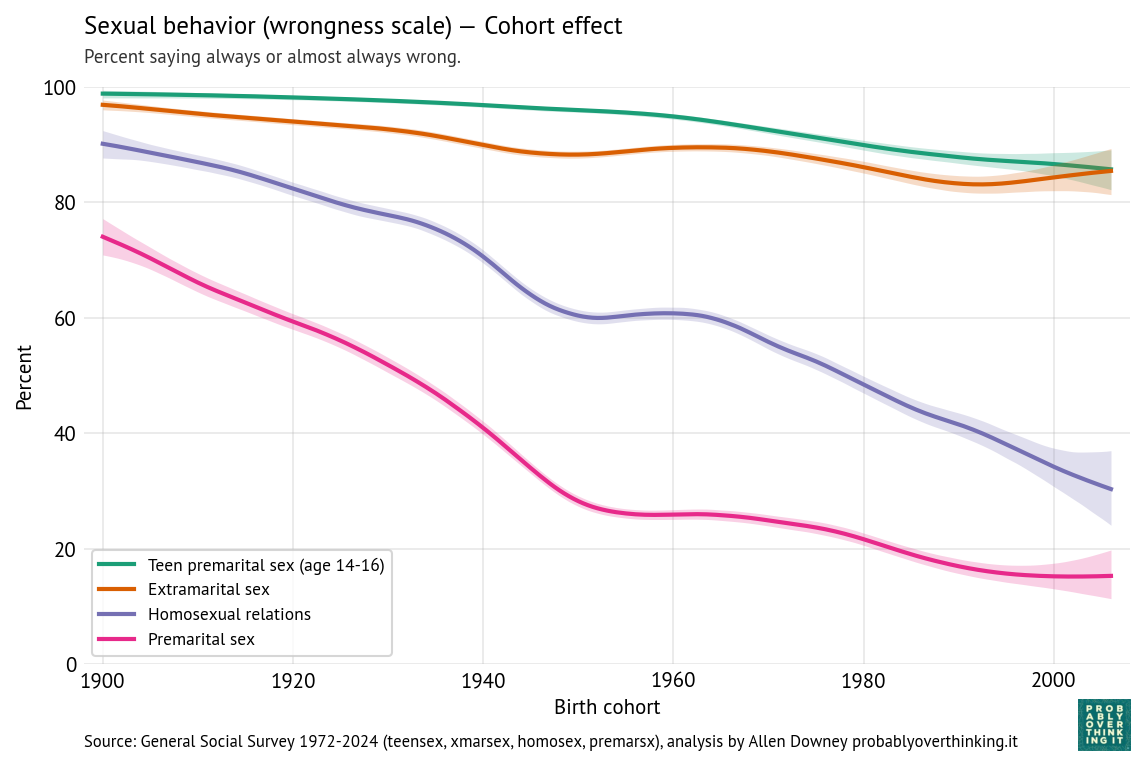

For all four scenarios, we can estimate the cohort effect, controlling for the period effect, shown in the following figure.

The cohort effect trends consistently downward in every case: modestly for extramarital and teen sex, and much more steeply for premarital sex and same-sex relations.

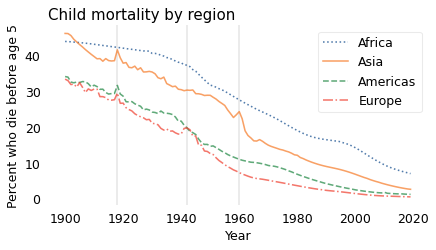

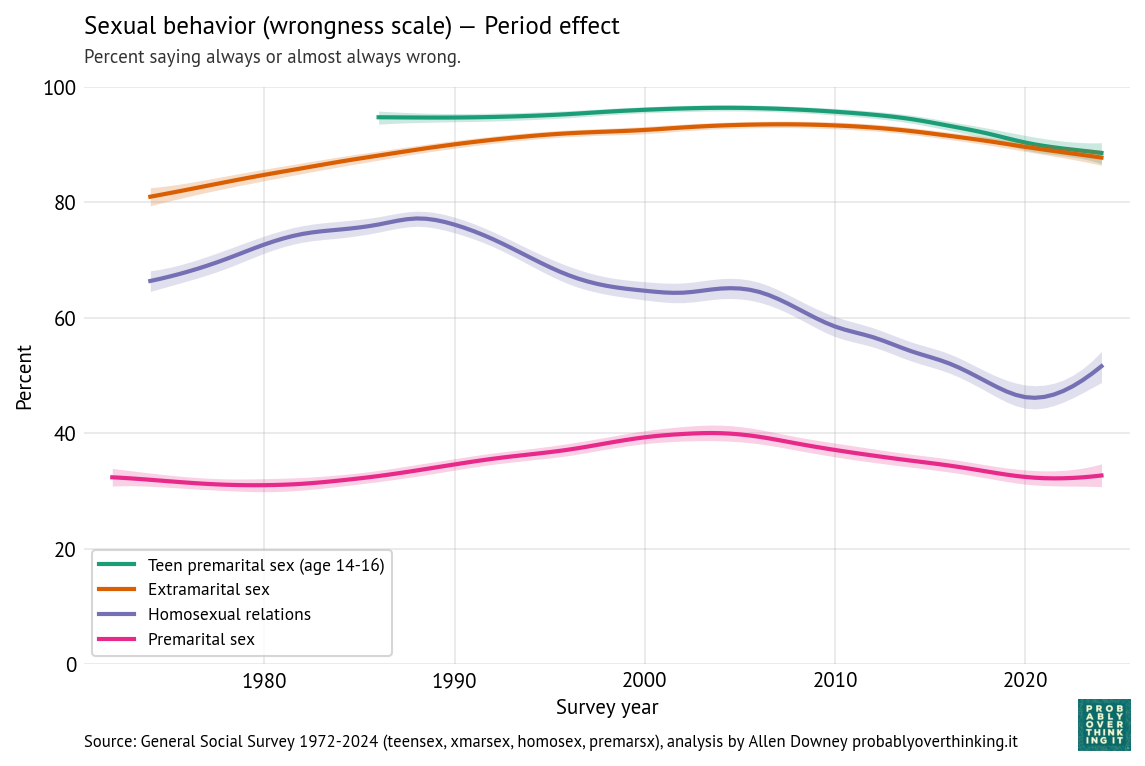

The following figure shows the period effects.

After controlling for the cohort effects, the remaining period effects are more modest.

- Opposition to teen sex is mostly unchanged, with some decline since 2010.

- Opposition to extramarital sex increased between 1972 and 2010, and has decreased since then.

- Opposition to same-sex relations shows by far the largest period effect, increasing between 1972 and 1990, and decreasing since then — although increasing again since 2020.

- Opposition to premarital sex has increased and decreased modestly.

In most scenarios, the cohort effect accounts for more of the observed change — but for same-sex relations, the period effect also makes a substantial contribution.

Dogma, morality, and health

To make sense of these patterns, let’s think about what people might mean when they say that sex is wrong.

Taking premarital sex as an example, some people consider sex outside marriage to be contrary to spiritual values or religious teachings. Others might be concerned with sexually transmitted disease or the social consequences of children born outside of stable families.

And for teens specifically, some object because they see adolescence as a period of innocence that should be protected, believe sexual activity compromises purity or chastity, or think sexual restraint reflects virtues like self-discipline and respect for social norms. Also, some might think teenagers don’t have the knowledge, experience, and impulse control to avoid health consequences of sex, especially pregnancy, or the maturity to handle emotional challenges.

Similarly, some people object to same-sex relations because they see them as contrary to religious teachings, inconsistent with traditional ideas about gender and family, or incompatible with social norms about sexuality.

And people might object to extramarital sex because of the emotional harm it causes, because it breaks a vow, because it threatens families and social stability, or because it violates holy matrimony.

In each scenario, objections arise from different concerns: practical risks and harms, social concerns about norms and stability, and moral or religious beliefs. Looking only at multiple-choice responses, we don’t know what respondents had in mind.

But the differences we see — between teen and extramarital sex on one hand, and premarital sex and same-sex relations on the other — suggest a conjecture: objections rooted in immediate harms and concrete consequences might be more stable over time; objections rooted in social norms, morality, and religion might be more historically contingent.

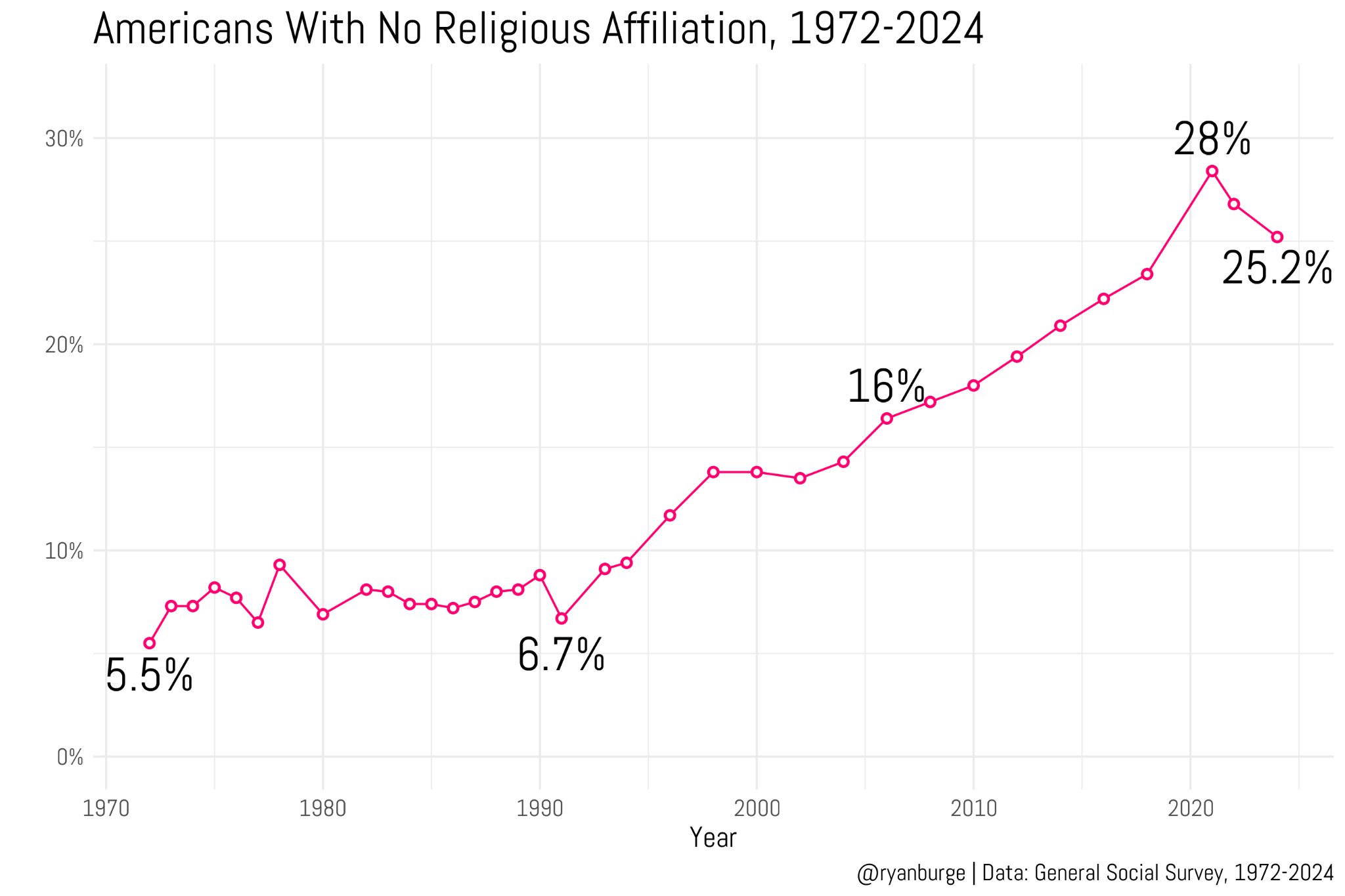

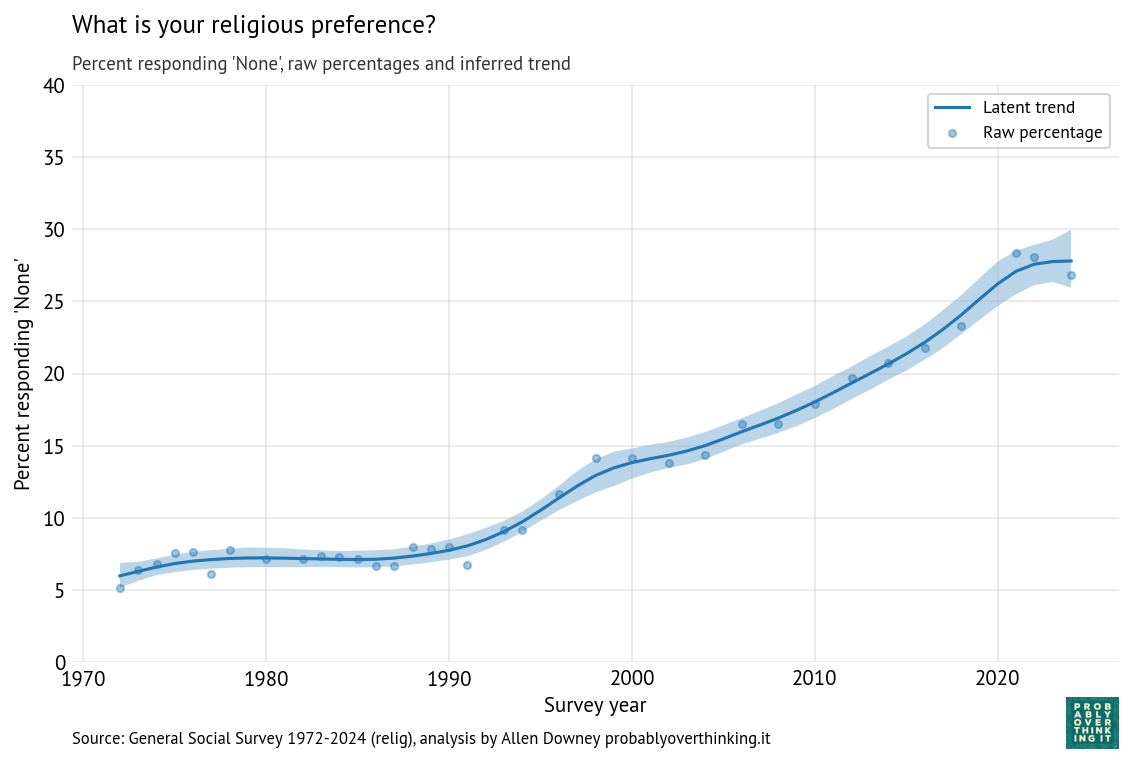

In the next article, we’ll test this conjecture by looking at relationships between religion and attitudes about sex — and how both have changed over time.